RESEARCH

Evaluating the Soundness of Security Metrics from Vulnerability Scoring Frameworks

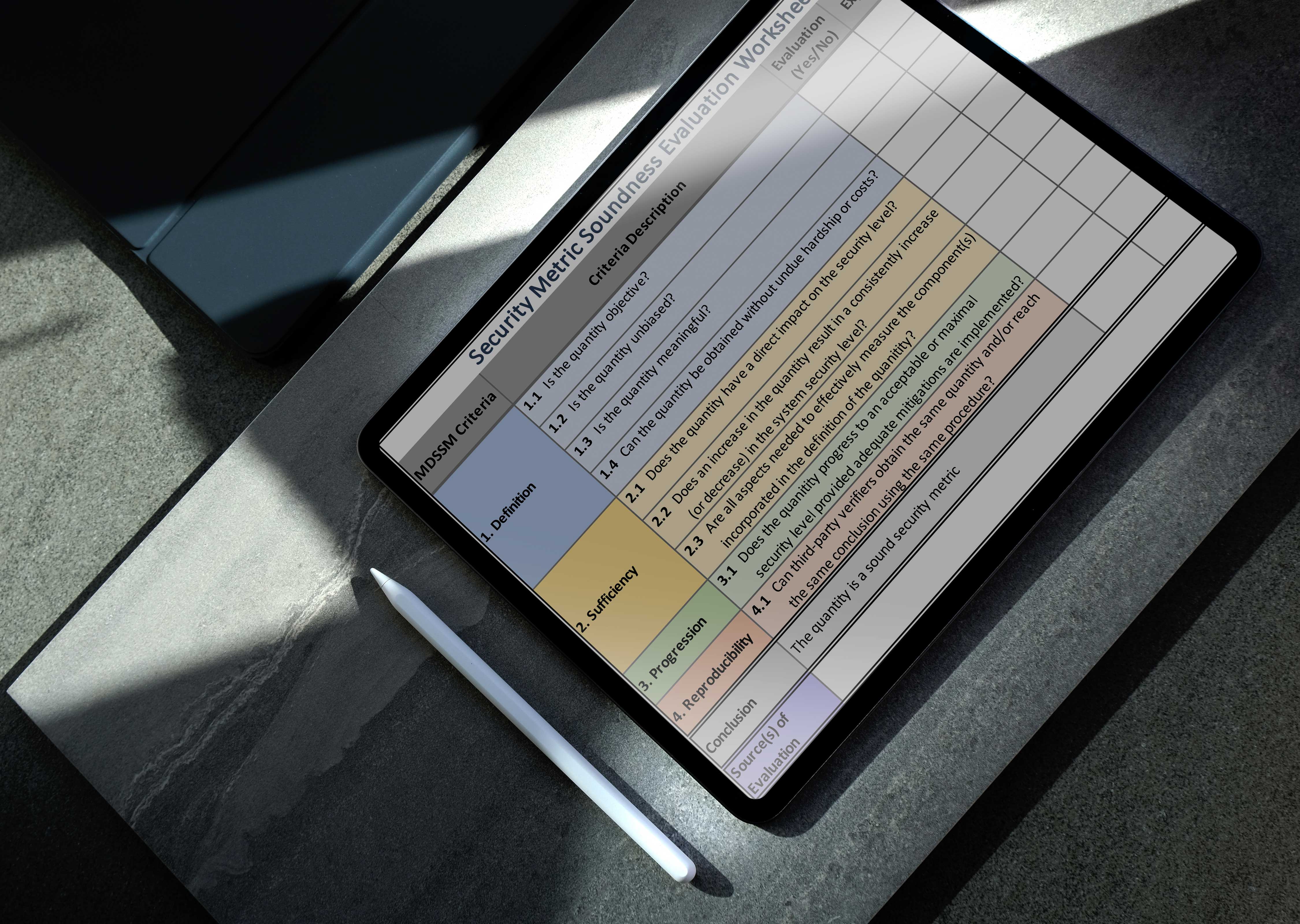

Over the years, a number of vulnerability scoring frameworks have been proposed to characterize the severity of known vulnerabilities in software-dependent systems. These frameworks provide security metrics to support decision-making in system development and security evaluation and assurance activities. When used in this context, it is imperative that these security metrics be sound, meaning that they can be consistently measured in a reproducible, objective, and unbiased fashion while providing contextually relevant, actionable information for decision makers. In this paper, we evaluate the soundness of the security metrics obtained via several vulnerability scoring frameworks. The evaluation is based on the Method for Designing Sound Security Metrics (MDSSM). We also present several recommendations to improve vulnerability scoring frameworks to yield more sound security metrics to support the development of secure software-dependent systems.

Key Takeaways

01. Context

To effectively manage a system's security, we need security metrics that are objective, meaningful, consistent, and dynamic. Such metrics can help in characterizing and managing risks by enabling effective decision-making to optimize resources and prioritize mitigation efforts. Over the years, a number of vulnerability scoring frameworks have proposed security metrics to support decision-making in system development and security evaluation and assurance activities.

02. Problem

Over the years, a number of vulnerability scoring frameworks aiming to estimate the severity of known vulnerabilities in software-dependent systems have been proposed. Each of these frameworks establishes a security metric to support decision-making in system development and security evaluation and assurance activities. While such frameworks have been widely used to characterize the severity of vulnerabilities, the soundness of their associated security metrics has yet to be formally evaluated.

03. Solution

We evaluate five vulnerability scoring frameworks reported in the literature that have found widespread use in security decision-making processes or that have been proposed for such purposes. Based on the results, we provide several recommendations to help developers, evaluators, and certifiers apply the frameworks in ways that can lead to sound security metrics. These recommendations can enable better decision-making based on sound science, which can improve security assurance for critical software-dependent systems.

04. Impact

Our results show that four of the five frameworks considered in our evaluation yield security metrics that are not sound. Relying on unsound metrics when making security-related decisions about software-dependent systems is problematic and raises questions as to whether decisions based on these metrics are justifiable or acceptable. These questions can subsequently impact security evaluation and assurance activities for such systems. We also provide several recommendations that can help remedy the issues in the evaluated frameworks and that should be considered in the development of new frameworks to guarantee the soundness of such metrics.